-

Docker搭建ELKF日志分析系统

Docker搭建ELKF日志分析系统

文章目录

资源列表

操作系统 配置 主机名 IP 所需软件 CentOS 7.9 4C8G docker 192.168.93.165 Docker-ce 26.1.2 基础环境

- 关闭防火墙

systemctl stop firewalld systemctl disable firewalld- 关闭内核安全机制

setenforce 0 sed -i "s/^SELINUX=.*/SELINUX=disabled/g" /etc/selinux/config- 修改主机名

hostnamectl set-hostname docker一、系统环境准备

- 基于Docker环境部署ELKF日志分析系统,实现日志分析功能

1.1、创建所需的映射目录

# 根据实际情况做修改 [root@docker ~]# mkdir -p /var/log/elasticsearch [root@docker ~]# chmod -R 777 /var/log/elasticsearch/1.2、修改系统参数

# 定义了一个进程可以拥有的最大内存映射区域数 [root@docker ~]# echo "vm.max_map_count=655360" >> /etc/sysctl.conf [root@docker ~]# sysctl -p vm.max_map_count = 655360 # 配置用户和系统级的资源限制。修改的内容立即生效(严谨) [root@docker ~]# cat >> /etc/security/limits.conf << EOF * soft nofile 65535 * hard nofile 65535 * soft nproc 65535 * hard nproc 63335 * soft memlock unlimited * hard memlock unlimited EOF1.3、单击创建elk-kgc网络桥接

[root@docker ~]# docker network create elk-kgc b8b1b7e36412169d689c39b39b5624c79f8fe0698a3c7b95dc1c78852285644e [root@docker ~]# docker network ls NETWORK ID NAME DRIVER SCOPE e8a2cadd9616 bridge bridge local b8b1b7e36412 elk-kgc bridge local 3566b89c775b host host local a5914394299b none null local二、基于Dockerfile构建Elasticsearch镜像

- 执行步骤如下:

2.1、创建Elasticsearch工作目录

[root@docker ~]# mkdir -p /root/elk/elasticsearch [root@docker ~]# cd /root/elk/elasticsearch/2.2、上传资源到指定工作路径

- 上传Elasticsearch的源码包和Elasticsearch配置文件到/root/elk/elasticsearch目录下,所需文件如下

[root@docker elasticsearch]# ll total 27872 -rw-r--r-- 1 root root 28535876 Jun 6 22:45 elasticsearch-6.1.0.tar.gz -rw-r--r-- 1 root root 3017 Jun 6 22:44 elasticsearch.yml # 配置文件内容yml如下 [root@docker elasticsearch]# cat elasticsearch.yml | grep -v "#" cluster.name: kgc-elk node.name: node-1 path.logs: /var/log/elasticsearch bootstrap.memory_lock: false network.host: 0.0.0.0 http.port: 92002.3、编写Dockerfile文件

[root@docker elasticsearch]# cat Dockerfile FROM centos:7 MAINTAINER wzh@kgc.com RUN yum -y install java-1.8.0-openjdk vim telnet lsof ADD elasticsearch-6.1.0.tar.gz /usr/local/ RUN cd /usr/local/elasticsearch-6.1.0/config RUN mkdir -p /data/behavior/log-node1 RUN mkdir /var/log/elasticsearch COPY elasticsearch.yml /usr/local/elasticsearch-6.1.0/config/ RUN useradd es && chown -R es:es /usr/local/elasticsearch-6.1.0/ RUN chmod +x /usr/local/elasticsearch-6.1.0/bin/* RUN chown -R es:es /var/log/elasticsearch/ RUN chown -R es:es /data/behavior/log-node1/ RUN sed -i "s/-Xms1g/-Xms2g/g" /usr/local/elasticsearch-6.1.0/config/jvm.options RUN sed -i "s/-Xmx1g/-Xmx2g/g" /usr/local/elasticsearch-6.1.0/config/jvm.options EXPOSE 9200 EXPOSE 9300 CMD su es /usr/local/elasticsearch-6.1.0/bin/elasticsearch2.4、构建Elasticsearch镜像

[root@docker elasticsearch]# docker build -t elasticsearch .三、基于Dockerfile构建Kibana镜像

- 执行步骤如下:

3.1、创建Kibana工作目录

[root@docker ~]# mkdir -p /root/elk/kibana3.2、上传资源到指定工作目录

- 上传kibana的源码包到/root/elk/kibana目录下

[root@docker ~]# ll /root/elk/kibana/ total 64404 -rw-r--r-- 1 root root 65947685 Jun 6 23:09 kibana-6.1.0-linux-x86_64.tar.gz3.3、编写Dockerfile文件

[root@docker ~]# cd /root/elk/kibana/ [root@docker kibana]# cat Dockerfile FROM centos:7 MAINTAINER wzh@kgc.com RUN yum -y install java-1.8.0-openjdk vim telnet lsof ADD kibana-6.1.0-linux-x86_64.tar.gz /usr/local/ RUN cd /usr/local/kibana-6.1.0-linux-x86_64 RUN sed -i "s/#server.name: \"your-hostname\"/server.name: "kibana-hostname"/g" /usr/local/kibana-6.1.0-linux-x86_64/config/kibana.yml RUN sed -i "s/#server.port: 5601/server.port: \"5601\"/g" /usr/local/kibana-6.1.0-linux-x86_64/config/kibana.yml RUN sed -i "s/#server.host: \"localhost\"/server.host: \"0.0.0.0\"/g" /usr/local/kibana-6.1.0-linux-x86_64/config/kibana.yml RUN sed -ri '/elasticsearch.url/ s/^#|"//g' /usr/local/kibana-6.1.0-linux-x86_64/config/kibana.yml RUN sed -i "s/localhost:9200/elasticsearch:9200/g" /usr/local/kibana-6.1.0-linux-x86_64/config/kibana.yml EXPOSE 5601 CMD ["/usr/local/kibana-6.1.0-linux-x86_64/bin/kibana"]3.4、构建Kibana镜像

[root@docker kibana]# docker build -t kibana .四、基于Dockerfile构建Logstash镜像

- 执行步骤如下

4.1、创建Logstash工作目录

[root@docker ~]# mkdir -p /root/elk/logstash4.2、编写Dockerfile文件

[root@docker ~]# cd /root/elk/logstash/ [root@docker logstash]# cat Dockerfile FROM centos:7 MAINTAINER wzh@kgc.com RUN yum -y install java-1.8.0-openjdk vim telnet lsof ADD logstash-6.1.0.tar.gz /usr/local/ RUN cd /usr/local/logstash-6.1.0/ ADD run.sh /run.sh RUN chmod 755 /*.sh EXPOSE 5044 CMD ["/run.sh"]4.3、创建CMD运行的脚本文件

[root@docker logstash]# cat run.sh #!/bin/bash /usr/local/logstash-6.1.0/bin/logstash -f /opt/logstash/conf/nginx-log.conf4.4、上传资源到指定工作目录

- 上传logstash的源码包到/root/elk/logstash目录下,所需文件如下

[root@docker logstash]# ll total 107152 -rw-r--r-- 1 root root 230 Jun 7 00:41 Dockerfile -rw-r--r-- 1 root root 109714065 Jun 7 00:40 logstash-6.1.0.tar.gz -rw-r--r-- 1 root root 88 Jun 7 00:42 run.sh4.5、构建Logstash镜像

[root@docker logstash]# docker build -t logstash .4.6、logstash配置文件详解

-

logstash功能非常强大,不仅仅是分析传入的文本,还可以作监控与告警之用。现在介绍logstash的配置文件其使用经验

-

logstash默认的配置文件不需要修改,只需要启动的时候指定一个配置文件即可!比如run.sh脚本中指定/opt/logstash/conf/nginx-log.conf。注意:文件包含了input、filter、output三部分,其中filter不是必须的

[root@docker ~]# mkdir -p /opt/logstash/conf [root@docker ~]# vim /opt/logstash/conf/nginx-log.conf input { beats { port => 5044 } } filter { if "www-bdqn-cn-pro-access" in [tags] { grok { match => {"message" => '%{QS:agent} \"%{IPORHOST:http_x_forwarded_for}\" - \[%{HTTPDATE:timestamp}\] \"(?:%{WORD:verb} %{NOTSPACE:request}(?: HTTP/%{NUMBER:http_version})?|-)\" %{NUMBER:response} %{NUMBER:bytes} %{QS:referrer} %{IPORHOST:remote_addr}:%{POSINT:port} %{NUMBER:remote_addr_response} %{BASE16FLOAT:request_time}'} } } urldecode {all_fields => true} date { match => [ "timestamp" , "dd/MMM/YYYY:HH:mm:ss Z" ] } useragent { source => "agent" target => "ua" } } output { if "www-bdqn-cn-pro-access" in [tags] { elasticsearch { hosts => ["elasticsearch:9200"] manage_template => false index => "www-bdqn-cn-pro-access-%{+YYYY.MM.dd}" } } } # 注意:使用nginx-log.conf文件拷贝时,match最长的一行自动换行问题4.6.1、关于filter部分

- 输入和输出在logstash配置中是很简单的一步,而对数据进行匹配过滤处理显得复杂。匹配当行日志是入门水平需要掌握的,而多行甚至不规则的日志则需要ruby的协助。本例主要展示grok插件

- 以下是某生产环境nginx的access日志格式

log_format main '"$http_user_agent""$http_x_forwarded_for" ' '$remote_user [$time_local] "$request" ' '$status $body_bytes_sent "$http_referer" ' '$upstream_addr $upstream_status $upstream_response_time';- 下面是对应上述nginx日志格式的grok捕获语法

'%{QS:agent} \"%{IPORHOST:http_x_forwarded_for}\" - \[%{HTTPDATE:timestamp}\] \"(?:%{WORD:verb} %{NOTSPACE:request}(?: HTTP/%{NUMBER:http_version})?|- )\"%{NUMBER:response}%{NUMBER:bytes}%{QS:referrer}%{IP ORHOST:remote_addr}:%{POSINT:port} %{NUMBER:remote_addr_response}%{BASE16FLOAT:request_ time}'在filter段内的第一行是判断语句,如果www-bdqn-cn-pro-access自定义字符在tags内,则使用grok段内的语句对日志进行处理

- geopi:使用GeoIP数据库对client_ip字段的IP地址进行解析,可得出该IP的经纬度、国家与城市等信息,但精确度不高,这主要依赖于GeoIP数据库

- date:默认情况下,Elasticsearch内记录的date字段是Elasticsearch接收到该日志的时间,但在实际应用中需要修改为日志中所记录的时间。这时,需要指定记录时间的字段并指定时间格式。如果匹配成功,则会将日志的时间替换至date字段中

- useragent:主要为webapp提供的解析,可以解析目前常见的一些useragent

4.6.2、关于output部分

- logstash可以在上层配置一个负载调度器实现群集。在实际应用中,logstash服务需要处理多种不同类型的日志或数据。处理后的日志或数据需要存放在不同的Elasticsearch群集或索引中,需要对日志进行分类

output { if "www-bdqn-cn-pro-access" in [tags] { elasticsearch { hosts => ["elasticsearch:9200"] manage_template => false index => "www-bdqn-cn-pro-access-%{+YYYY.MM.dd}" } } }通过在output配置中设定判断语句,将处理后的数据存放到不同的索引中。而这个tags的添加有以下三种途径:

- 在Filebeat读取数据后,向logstash发送前添加到数据中

- logstash处理日志的时候,向tags标签添加自定义内容

- 在logstash接收传入数据时,向tags标签添加自定义内容

从上面的输入配置文件中可以看出,这里来采用的第一种图形,在Filebeat读取数据后,向logstash发送数据前添加www-bdqn-cn-pro-access的tag

这个操作除非在后续处理数据的时候手动将其删除,否则将永久存在该数据中

Elasticsearch字段的各参数意义如下:

- hosts:指定Elasticsearch地址,如有多个节点可用,可以设置为array模式,可实现负载均衡

- manage_template:如果该索引没有合适的模板可用,默认情况下将由默认的模板进行管理

- index:只当存储数据的索引

五、基于Dockerfile构建Filebeat镜像

- 执行步骤如下:

5.1、创建Filebeat工作目录

[root@docker ~]# mkdir -p /root/elk/Filebeat5.2、编写Dockerfile文件

[root@docker ~]# cd /root/elk/Filebeat/ [root@docker Filebeat]# cat Dockerfile FROM centos:7 MAINTAINER wzh@kgc.com ADD filebeat-6.1.0-linux-x86_64.tar.gz /usr/local/ RUN cd /usr/local/filebeat-6.1.0-linux-x86_64 RUN mv /usr/local/filebeat-6.1.0-linux-x86_64/filebeat.yml /root COPY filebeat.yml /usr/local/filebeat-6.1.0-linux-x86_64/ ADD run.sh /run.sh RUN chmod 755 /*.sh CMD ["/run.sh"]5.3、创建CMD运行的脚本文件

[root@docker Filebeat]# cat run.sh #!/bin/bash /usr/local/filebeat-6.1.0-linux-x86_64/filebeat -e -c /usr/local/filebeat-6.1.0-linux-x86_64/filebeat.yml5.4、上传资源到指定工作目录

- 上传Filebeat的源码包和Filebeat配置文件到/root/elk/filebeat目录下,所需文件如下

[root@docker Filebeat]# ll total 11660 -rw-r--r-- 1 root root 312 Jun 7 01:11 Dockerfile -rw-r--r-- 1 root root 11926942 Jun 7 01:09 filebeat-6.1.0-linux-x86_64.tar.gz -rw-r--r-- 1 root root 186 Jun 7 01:14 filebeat.yml -rw-r--r-- 1 root root 118 Jun 7 01:12 run.sh5.5、构建Filebeat镜像

[root@docker Filebeat]# docker build -t filebeat .5.6、Filebeat.yml文件详解

- Filebeat配置我呢见详解查看Filebeat的配置文件

[root@docker Filebeat]# cat filebeat.yml filebeat.prospectors: - input_type: log paths: - /var/log/nginx/www.bdqn.cn-access.log tags: www-bdqn-cn-pro-access clean_*: true output.logstash: hosts: ["logstash:5044"] # 每个Filebeat可以根据需求的不同拥有一个或多个prospectors。其他配置信息含义如下: 1、input_type:输入的内容,主要为逐行读取的log格式与标准输入stdin 2、paths:指定需要读取的日志的路径,如果路径拥有相同的结构,则可以使用通配符 3、tags:为该路径的日志添加自定义tags 4、clean_:Filebeat在/var/lib/filebeat/registry下有个注册表文件,它记录着Filebeat读取过的文件,还有已经读取的行数等信息。如果日志文件是定时分割,而且数量会随之增加,那么该注册表文件也会慢慢增大。随着注册表的增大,会导致Filebeat检索的性能下降 5、output.logstash:定义内容输出的路径,这里主要输出到Elasticsearch 6、hosts:只当服务地址六、启动Nginx容器作为日志输入源

- 使用docker run命令启动一个nginx容器

[root@docker ~]# docker run -itd -p 80:80 --network elk-kgc -v /var/log/nginx:/var/log/nginx --name nginx-elk nginx:latest- 本地目录/var/log/nginx必须挂载到Filebeat容器中,让Filebeat可以采集到日目录

- 手动模拟生产环境几条日志文件作为nignx容器所产生的站点日志,同样注意拷贝的时候换行问题

[root@docker nginx]# cat www.bdqn.cn-access.log "YisouSpider" "106.11.155.156" - [18/Jul/2020:00:00:13 +0800] "GET /applier/position?gwid=17728&qyid=122257 HTTP/1.0" 200 9197 "-" 192.168.10.131:80 2000.032 "-""162.209.213.146" - [18/Jul/2020:00:02:11 +0800] "GET //tag/7764.shtml HTTP/1.0" 200 24922 "-" 192.168.10.131:80 200 0.074 "YisouSpider" "106.11.152.248" - [18/Jul/2020:00:07:44+0800] "GET /news/201712/21424.shtml HTTP/1.0" 200 8821 "-" 192.168.10.131:80 2000.097 "YisouSpider" "106.11.158.233" - [18/Jul/2020:00:07:44+0800] "GET /news/201301/7672.shtml HTTP/1.0" 200 8666 "-" 192.168.10.131:80 2000.111 "YisouSpider" "106.11.159.250" - [18/Jul/2020:00:07:44+0800] "GET /news/info/id/7312.html HTTP/1.0" 200 6617 "-" 192.168.10.131:80 2000.339 "Mozilla/5.0 (compatible;SemrushBot/2~bl;+http://www.semrush.com/bot.html)" "46.229.168.83" - [18/Jul/2020:00:08:57+0800] "GET /tag/1134.shtml HTTP/1.0"2006030"-"192.168.10.131:80 200 0.079七、启动Filebeat+ELK日志收集环境

- 注意启动顺序和查看启动日志

7.1、启动Elasticsearch

[root@docker ~]# docker run -itd -p 9200:9200 -p 9300:9300 --network elk-kgc -v /var/log/elasticsearch:/var/log/elasticsearch --name elasticsearch elasticsearch7.2、启动Kibana

[root@docker ~]# docker run -itd -p 5601:5601 --network elk-kgc --name kibana kibana:latest7.3、启动Logstash

[root@docker ~]# docker run -itd -p 5044:5044 --network elk-kgc -v /opt/logstash/conf:/opt/logstash/conf --name logstash logstash:latest7.4、启动Filebeat

[root@docker ~]# docker run -itd --network elk-kgc -v /var/log/nginx:/var/log/nginx --name filebeat filebeat:latest八、Kibana Web管理

- 因为kibana的数据需要从Elasticsearch中读取,所以需要Elasticsearch中有数据才能创建索引,创建不同的索引区分不同的数据集

8.1、访问Kibana

-

浏览器输入http://192.168.93.165:5601访问kibana控制台。在Management中找到Indexpatterns,单击进去可以看到类似以下图片中的界面,填写www-bdqn-cn-pro-access-*

-

在TimeFilterfieldname选项框中选中@timestemp这个选项。在kibana中,默认通过时间来排序。如果将日志存放入Elasticsearch的时候没有指定@timestamp字段内容,则Elasticsearch会分配接收到的日志时的时间作为该日志@timestamp的值

-

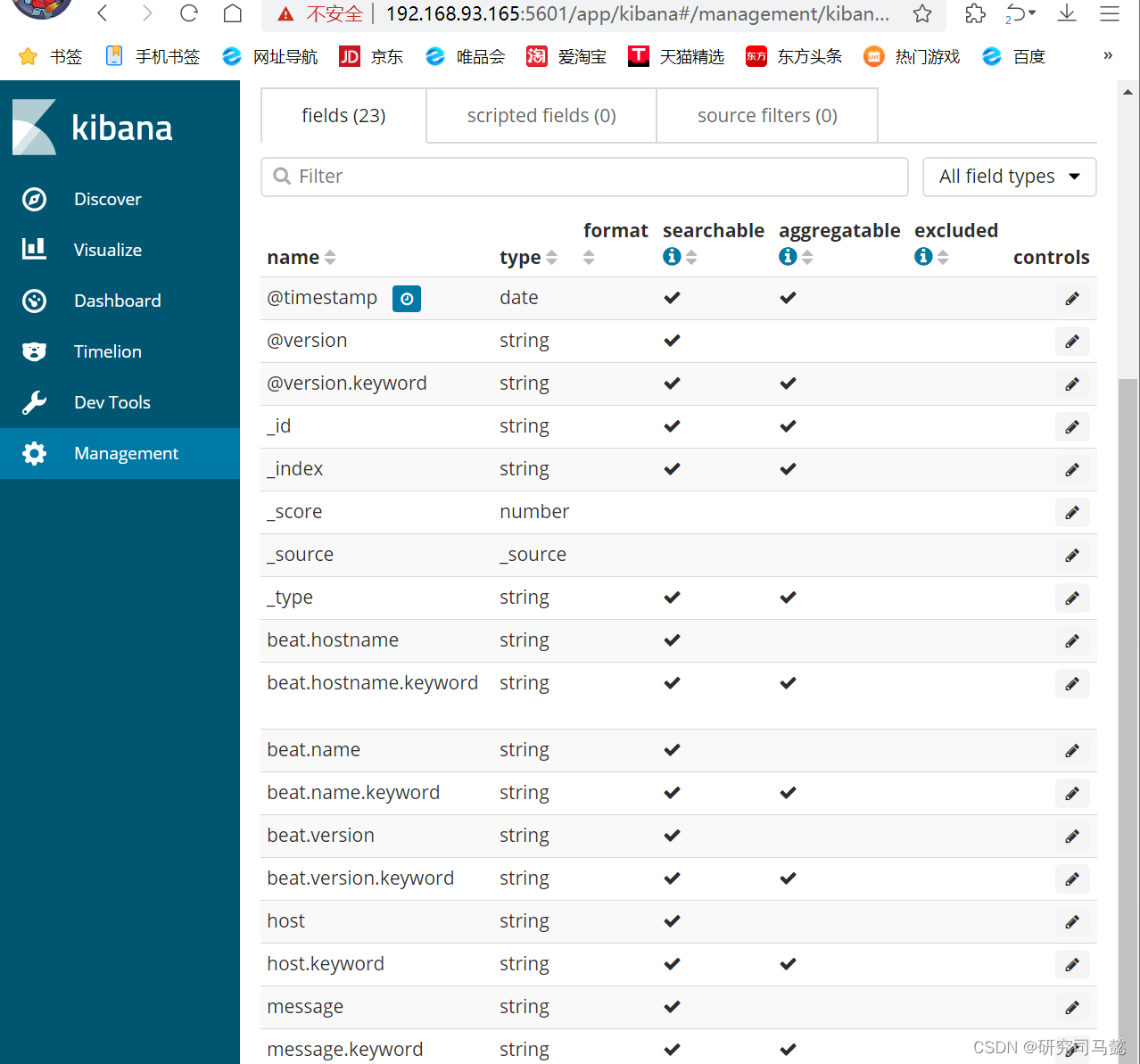

单击**“Createindexpattern”**按钮,创建www-bdqn-cn-pro-access索引后界面效果如下

-

单击“Discover”标签,可能会看不到数据。需要将时间轴选中为“Thisyear”才可以看到的内容

九、Kibana图示分析

- 打开kibana的管理姐买你,单击“visualize”标签——Create a visualization,选择饼状图pie,添加索引www-bdqn-cn-por-access-*,点开SplitSlices,选中Terms,再从FieId选中messagekeyword,最后点击上面三角按钮即可生成可访问最多的5个公网IP地址

-

相关阅读:

【Java系列】JDK 1.8 新特性之 Lambda表达式

前端工程化01-复习jQuery当中的AJAX

通过Fuseki进行三元组数据的新增、删除和查询

Qt xml学习之calculator-qml

面试 | 互联网大厂测试开发岗位会问哪些问题?

MybatisPlus学习

Go-知识map

力扣 -- 132. 分割回文串 II

Apache Paimon实时数据糊介绍

Gitee仓库介绍和项目纳入版本管理+ignore文件配置

- 原文地址:https://blog.csdn.net/weixin_73059729/article/details/139533283