-

Apache Griffin

Griffin User Guide: https://griffin.apache.org/docs/quickstart-cn.html.

Griffin deploy Doc: https://github.com/apache/griffin/blob/master/griffin-doc/ui/user-guide.md.

在一秒钟内看到本质的人和花半辈子也看不清一件事本质的人,自然是不一样的命运。

1.Introduction

Document: https://griffin.apache.org/docs/quickstart-cn.html.

在Griffin的架构中,主要分为Define、Measure和Analyze三个部分

Define:主要负责定义数据质量统计的维度,比如数据质量统计的时间跨度、统计的目标(源端和目标端的数据数量是否一致,数据源里某一字段的非空的数量、不重复值的数量、最大值、最小值、top5的值数量等)Measure:主要负责执行统计任务,生成统计结果Analyze:主要负责保存与展示统计结果

1.1 特性

- 度量:精确度、完整性、及时性、唯一性、有效性、一致性。

- 异常监测:利用预先设定的规则,检测出不符合预期的数据,提供不符合规则数据的下载。

- 异常告警:通过邮件或门户报告数据质量问题。

- 可视化监测:利用控制面板来展现数据质量的状态。

- 实时性:可以实时进行数据质量检测,能够及时发现问题。

- 可扩展性:可用于多个数据系统仓库的数据校验。

- 可伸缩性:工作在大数据量的环境中,目前运行的数据量约1.2PB(eBay环境)。

- 自助服务:Griffin提供了一个简洁易用的用户界面,可以管理数据资产和数据质量规则;同时用户可以通过控制面板查看数据质量结果和自定义显示内容。

1.2 数据质量指标说明

- 精确度:度量数据是否与指定的目标值匹配,如金额的校验,校验成功的记录与总记录数的比值。

- 完整性:度量数据是否缺失,包括记录数缺失、字段缺失,属性缺失。

- 及时性:度量数据达到指定目标的时效性。

- 唯一性:度量数据记录是否重复,属性是否重复;常见为度量为hive表主键值是否重复。

- 有效性:度量数据是否符合约定的类型、格式和数据范围等规则。

- 一致性:度量数据是否符合业务逻辑,针对记录间的逻辑的校验,如:pv一定是大于uv的,订单金额加上各种优惠之后的价格一定是大于等于0的。

2.Install

2.1 Create Directory

mkdir /home/xxxx/software

mkdir /home/xxxx/software/data

sudo ln -s /home/xxxx/software /apache

sudo ln -s /apache/data /data

mkdir /apache/tmp

mkdir /apache/tmp/hive2.2 Install Openjdk

- Openjdk Website: https://jdk.java.net/java-se-ri/8-MR3.

- 下载并上传到software

tar -zxvf openjdk-8u41-b04-linux-x64-14_jul_2022.tar.gz

mv openjdk-8xxxx open_jdk - 修改配置文件

sudo vim /etc/profile

export JAVA_HOME=/home/xxxx/software/open_jdk export PATH=$PATH:$JAVA_HOME/bin- 1

- 2

- 保存配置,查看版本

source /etc/profile

java -version

2.3 Install Mysql

Mysql — linux install: https://blog.csdn.net/weixin_43916074/article/details/125284109.

2.4 Install npm

sudo yum install nodejs

sudo yum install npm

node -v

npm -v2.5 Install Hadoop

- Hadoop Website: https://hadoop.apache.org/releases.html.

- 下载并上传到software

tar -zxvf hadoop-2.10.2.tar.gz

sudo mv hadoop-2.10.2 hadoop - 修改配置

sudo vim /etc/profile

export HADOOP_HOME=/home/xxx/software/hadoop export HADOOP_COMMON_HOME=/home/xxx/software/hadoop export HADOOP_COMMON_LIB_NATIVE_DIR=/home/xxx/software/hadoop/lib/native export HADOOP_HDFS_HOME=/home/xxx/software/hadoop export HADOOP_INSTALL=/home/xxx/software/hadoop export HADOOP_MAPRED_HOME=/home/xxx/software/hadoop export HADOOP_USER_CLASSPATH_FIRST=true export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop export PATH=$PATH:$HADOOP_HOME/bin- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 保存配置,查看版本

source /etc/profile

hadoop version

2.6 Install Hive

- 版本对应

Hive Website: https://hive.apache.org/.

- 下载上传到softeare

tar -xzvf apache-hive-2.3.9-bin.tar.gz

sudo mv apache-hive-2.3.9-bin hive - 修改配置

sudo vim /etc/profile

export HIVE_HOME=/home/xxx/software/hive export PATH=$PATH:$HIVE_HOME/bin- 1

- 2

- 保存配置,查看版本

source /etc/profile

hive --version

2.7 Install Scala

- Scala Website: https://www.scala-lang.org/download/2.13.8.html.

Download: https://downloads.lightbend.com/scala/2.13.8/scala-2.13.8.tgz.

- 下载并上传到software

tar -zxvf scala-2.13.8.tgz

sudo mv scala-2.13.8 scala - 修改配置

sudo vim /etc/profile

export SCALA_HOME=/home/xxxx/software/scala export CLASSPATH=$SCALA_HOME/lib/ export PATH=$PATH:$SCALA_HOME/bin- 1

- 2

- 3

- 保存配置,查看版本

source /etc/profile

scala -version

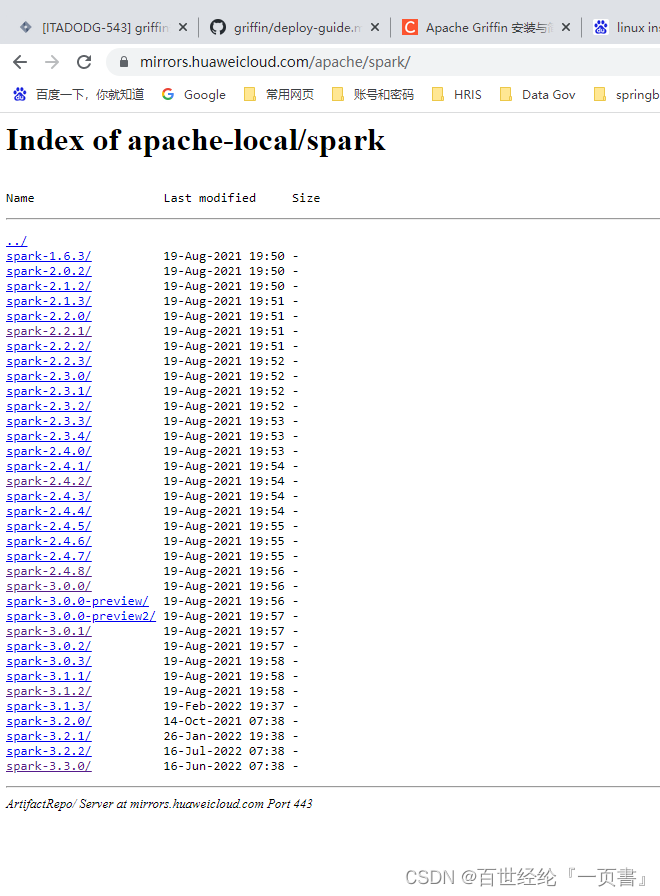

2.8 Install Spark

- Spark Website: https://spark.apache.org/downloads.html.

- Spark Download: https://mirrors.huaweicloud.com/apache/spark/.

- 下载并上传到software

tar -zxvf spark-2.2.1-bin-without-hadoop.tgz

sudo mv spark-2.2.1-bin-without-hadoop spark - 修改配置

sudo vim /etc/profile

export SPARK_HOME=/home/xxx/software/spark export PATH=$PATH:$SPARK_HOME/bin- 1

- 2

- 保存配置,查看版本

source /etc/profile

2.9 Install Elasticsearch

- ES Website: https://www.elastic.co/cn/downloads/elasticsearch.

- 下载并上传到software

unzip elasticsearch-5.6.16.zip

mv elasticsearch-5.6.16 elastic - 修改配置,加入下面的内容

vi /elastic/config/elasticsearch.yml

network.host: 127.0.0.1 http.cors.enabled: true http.cors.allow-origin: '\*'- 1

- 2

- 3

- 启动

vi ~/software/elastic/bin

./elasticsearch

- 另起窗口访问自己

curl 127.0.0.1:9200

2.10 Install Livy

- Livy Download: http://mirrors.cloud.tencent.com/apache/incubator/livy/0.7.1-incubating/.

- 下载并上传到software

unzip apache-livy-0.7.1-incubating-bin.zip

mv apache-livy-0.7.1-incubating-bin livy - 修改配置

sudo vim /etc/profile

export LIVY_HOME=/home/os-nan.zhao/software/livy export PATH=$PATH:$LIVY_HOME/bin- 1

- 2

- 保存配置,查看版本

source /etc/profile

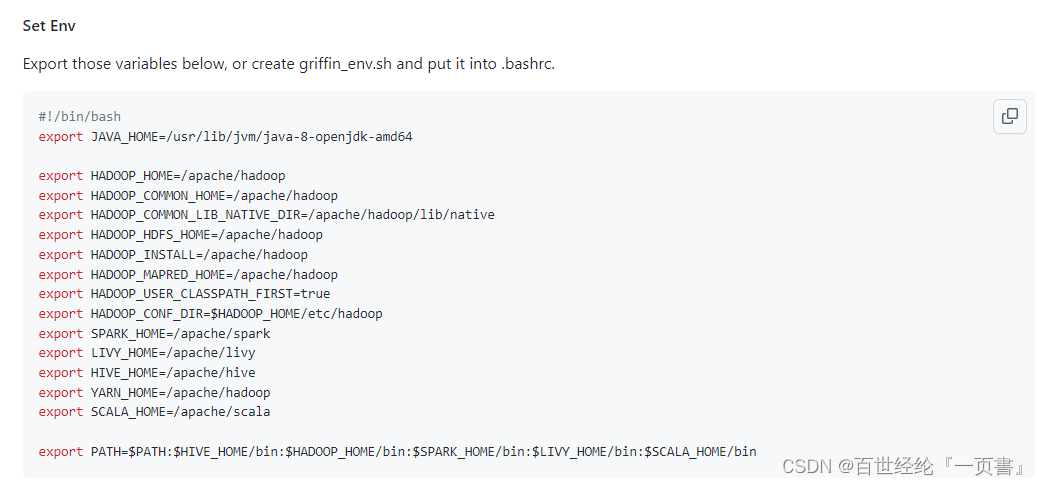

官方是一口气就全都配好了

3.User Guide

User Guide: https://github.com/apache/griffin/blob/master/griffin-doc/deploy/deploy-guide.md.

3.1 config

Mysql

- 修改密码

ALTER USER ‘root’@‘localhost’ IDENTIFIED BY ‘DataGov@123’; - 登录,创建DB

create database quartz - 写入数据,要先exit

mysql -u root -p quartz < Init_quartz_mysql_innodb.sql

-- Licensed to the Apache Software Foundation (ASF) under one -- or more contributor license agreements. See the NOTICE file -- distributed with this work for additional information -- regarding copyright ownership. The ASF licenses this file -- to you under the Apache License, Version 2.0 (the -- "License"); you may not use this file except in compliance -- with the License. You may obtain a copy of the License at -- -- http://www.apache.org/licenses/LICENSE-2.0 -- -- Unless required by applicable law or agreed to in writing, -- software distributed under the License is distributed on an -- "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY -- KIND, either express or implied. See the License for the -- specific language governing permissions and limitations -- under the License. -- In your Quartz properties file, you'll need to set -- org.quartz.jobStore.driverDelegateClass = org.quartz.impl.jdbcjobstore.PostgreSQLDelegate DROP TABLE IF EXISTS qrtz_fired_triggers; DROP TABLE IF EXISTS qrtz_paused_trigger_grps; DROP TABLE IF EXISTS qrtz_scheduler_state; DROP TABLE IF EXISTS qrtz_locks; DROP TABLE IF EXISTS qrtz_simple_triggers; DROP TABLE IF EXISTS qrtz_cron_triggers; DROP TABLE IF EXISTS qrtz_simprop_triggers; DROP TABLE IF EXISTS qrtz_blob_triggers; DROP TABLE IF EXISTS qrtz_triggers; DROP TABLE IF EXISTS qrtz_job_details; DROP TABLE IF EXISTS qrtz_calendars; CREATE TABLE qrtz_job_details ( SCHED_NAME VARCHAR(120) NOT NULL, JOB_NAME VARCHAR(200) NOT NULL, JOB_GROUP VARCHAR(200) NOT NULL, DESCRIPTION VARCHAR(250) NULL, JOB_CLASS_NAME VARCHAR(250) NOT NULL, IS_DURABLE BOOL NOT NULL, IS_NONCONCURRENT BOOL NOT NULL, IS_UPDATE_DATA BOOL NOT NULL, REQUESTS_RECOVERY BOOL NOT NULL, JOB_DATA BYTEA NULL, PRIMARY KEY (SCHED_NAME,JOB_NAME,JOB_GROUP) ); CREATE TABLE qrtz_triggers ( SCHED_NAME VARCHAR(120) NOT NULL, TRIGGER_NAME VARCHAR(200) NOT NULL, TRIGGER_GROUP VARCHAR(200) NOT NULL, JOB_NAME VARCHAR(200) NOT NULL, JOB_GROUP VARCHAR(200) NOT NULL, DESCRIPTION VARCHAR(250) NULL, NEXT_FIRE_TIME BIGINT NULL, PREV_FIRE_TIME BIGINT NULL, PRIORITY INTEGER NULL, TRIGGER_STATE VARCHAR(16) NOT NULL, TRIGGER_TYPE VARCHAR(8) NOT NULL, START_TIME BIGINT NOT NULL, END_TIME BIGINT NULL, CALENDAR_NAME VARCHAR(200) NULL, MISFIRE_INSTR SMALLINT NULL, JOB_DATA BYTEA NULL, PRIMARY KEY (SCHED_NAME,TRIGGER_NAME,TRIGGER_GROUP), FOREIGN KEY (SCHED_NAME,JOB_NAME,JOB_GROUP) REFERENCES QRTZ_JOB_DETAILS(SCHED_NAME,JOB_NAME,JOB_GROUP) ); CREATE TABLE qrtz_simple_triggers ( SCHED_NAME VARCHAR(120) NOT NULL, TRIGGER_NAME VARCHAR(200) NOT NULL, TRIGGER_GROUP VARCHAR(200) NOT NULL, REPEAT_COUNT BIGINT NOT NULL, REPEAT_INTERVAL BIGINT NOT NULL, TIMES_TRIGGERED BIGINT NOT NULL, PRIMARY KEY (SCHED_NAME,TRIGGER_NAME,TRIGGER_GROUP), FOREIGN KEY (SCHED_NAME,TRIGGER_NAME,TRIGGER_GROUP) REFERENCES QRTZ_TRIGGERS(SCHED_NAME,TRIGGER_NAME,TRIGGER_GROUP) ); CREATE TABLE qrtz_cron_triggers ( SCHED_NAME VARCHAR(120) NOT NULL, TRIGGER_NAME VARCHAR(200) NOT NULL, TRIGGER_GROUP VARCHAR(200) NOT NULL, CRON_EXPRESSION VARCHAR(120) NOT NULL, TIME_ZONE_ID VARCHAR(80), PRIMARY KEY (SCHED_NAME,TRIGGER_NAME,TRIGGER_GROUP), FOREIGN KEY (SCHED_NAME,TRIGGER_NAME,TRIGGER_GROUP) REFERENCES QRTZ_TRIGGERS(SCHED_NAME,TRIGGER_NAME,TRIGGER_GROUP) ); CREATE TABLE qrtz_simprop_triggers ( SCHED_NAME VARCHAR(120) NOT NULL, TRIGGER_NAME VARCHAR(200) NOT NULL, TRIGGER_GROUP VARCHAR(200) NOT NULL, STR_PROP_1 VARCHAR(512) NULL, STR_PROP_2 VARCHAR(512) NULL, STR_PROP_3 VARCHAR(512) NULL, INT_PROP_1 INT NULL, INT_PROP_2 INT NULL, LONG_PROP_1 BIGINT NULL, LONG_PROP_2 BIGINT NULL, DEC_PROP_1 NUMERIC(13,4) NULL, DEC_PROP_2 NUMERIC(13,4) NULL, BOOL_PROP_1 BOOL NULL, BOOL_PROP_2 BOOL NULL, PRIMARY KEY (SCHED_NAME,TRIGGER_NAME,TRIGGER_GROUP), FOREIGN KEY (SCHED_NAME,TRIGGER_NAME,TRIGGER_GROUP) REFERENCES QRTZ_TRIGGERS(SCHED_NAME,TRIGGER_NAME,TRIGGER_GROUP) ); CREATE TABLE qrtz_blob_triggers ( SCHED_NAME VARCHAR(120) NOT NULL, TRIGGER_NAME VARCHAR(200) NOT NULL, TRIGGER_GROUP VARCHAR(200) NOT NULL, BLOB_DATA BYTEA NULL, PRIMARY KEY (SCHED_NAME,TRIGGER_NAME,TRIGGER_GROUP), FOREIGN KEY (SCHED_NAME,TRIGGER_NAME,TRIGGER_GROUP) REFERENCES QRTZ_TRIGGERS(SCHED_NAME,TRIGGER_NAME,TRIGGER_GROUP) ); CREATE TABLE qrtz_calendars ( SCHED_NAME VARCHAR(120) NOT NULL, CALENDAR_NAME VARCHAR(200) NOT NULL, CALENDAR BYTEA NOT NULL, PRIMARY KEY (SCHED_NAME,CALENDAR_NAME) ); CREATE TABLE qrtz_paused_trigger_grps ( SCHED_NAME VARCHAR(120) NOT NULL, TRIGGER_GROUP VARCHAR(200) NOT NULL, PRIMARY KEY (SCHED_NAME,TRIGGER_GROUP) ); CREATE TABLE qrtz_fired_triggers ( SCHED_NAME VARCHAR(120) NOT NULL, ENTRY_ID VARCHAR(95) NOT NULL, TRIGGER_NAME VARCHAR(200) NOT NULL, TRIGGER_GROUP VARCHAR(200) NOT NULL, INSTANCE_NAME VARCHAR(200) NOT NULL, FIRED_TIME BIGINT NOT NULL, SCHED_TIME BIGINT NOT NULL, PRIORITY INTEGER NOT NULL, STATE VARCHAR(16) NOT NULL, JOB_NAME VARCHAR(200) NULL, JOB_GROUP VARCHAR(200) NULL, IS_NONCONCURRENT BOOL NULL, REQUESTS_RECOVERY BOOL NULL, PRIMARY KEY (SCHED_NAME,ENTRY_ID) ); CREATE TABLE qrtz_scheduler_state ( SCHED_NAME VARCHAR(120) NOT NULL, INSTANCE_NAME VARCHAR(200) NOT NULL, LAST_CHECKIN_TIME BIGINT NOT NULL, CHECKIN_INTERVAL BIGINT NOT NULL, PRIMARY KEY (SCHED_NAME,INSTANCE_NAME) ); CREATE TABLE qrtz_locks ( SCHED_NAME VARCHAR(120) NOT NULL, LOCK_NAME VARCHAR(40) NOT NULL, PRIMARY KEY (SCHED_NAME,LOCK_NAME) ); create index idx_qrtz_j_req_recovery on qrtz_job_details(SCHED_NAME,REQUESTS_RECOVERY); create index idx_qrtz_j_grp on qrtz_job_details(SCHED_NAME,JOB_GROUP); create index idx_qrtz_t_j on qrtz_triggers(SCHED_NAME,JOB_NAME,JOB_GROUP); create index idx_qrtz_t_jg on qrtz_triggers(SCHED_NAME,JOB_GROUP); create index idx_qrtz_t_c on qrtz_triggers(SCHED_NAME,CALENDAR_NAME); create index idx_qrtz_t_g on qrtz_triggers(SCHED_NAME,TRIGGER_GROUP); create index idx_qrtz_t_state on qrtz_triggers(SCHED_NAME,TRIGGER_STATE); create index idx_qrtz_t_n_state on qrtz_triggers(SCHED_NAME,TRIGGER_NAME,TRIGGER_GROUP,TRIGGER_STATE); create index idx_qrtz_t_n_g_state on qrtz_triggers(SCHED_NAME,TRIGGER_GROUP,TRIGGER_STATE); create index idx_qrtz_t_next_fire_time on qrtz_triggers(SCHED_NAME,NEXT_FIRE_TIME); create index idx_qrtz_t_nft_st on qrtz_triggers(SCHED_NAME,TRIGGER_STATE,NEXT_FIRE_TIME); create index idx_qrtz_t_nft_misfire on qrtz_triggers(SCHED_NAME,MISFIRE_INSTR,NEXT_FIRE_TIME); create index idx_qrtz_t_nft_st_misfire on qrtz_triggers(SCHED_NAME,MISFIRE_INSTR,NEXT_FIRE_TIME,TRIGGER_STATE); create index idx_qrtz_t_nft_st_misfire_grp on qrtz_triggers(SCHED_NAME,MISFIRE_INSTR,NEXT_FIRE_TIME,TRIGGER_GROUP,TRIGGER_STATE); create index idx_qrtz_ft_trig_inst_name on qrtz_fired_triggers(SCHED_NAME,INSTANCE_NAME); create index idx_qrtz_ft_inst_job_req_rcvry on qrtz_fired_triggers(SCHED_NAME,INSTANCE_NAME,REQUESTS_RECOVERY); create index idx_qrtz_ft_j_g on qrtz_fired_triggers(SCHED_NAME,JOB_NAME,JOB_GROUP); create index idx_qrtz_ft_jg on qrtz_fired_triggers(SCHED_NAME,JOB_GROUP); create index idx_qrtz_ft_t_g on qrtz_fired_triggers(SCHED_NAME,TRIGGER_NAME,TRIGGER_GROUP); create index idx_qrtz_ft_tg on qrtz_fired_triggers(SCHED_NAME,TRIGGER_GROUP); commit;- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

- 87

- 88

- 89

- 90

- 91

- 92

- 93

- 94

- 95

- 96

- 97

- 98

- 99

- 100

- 101

- 102

- 103

- 104

- 105

- 106

- 107

- 108

- 109

- 110

- 111

- 112

- 113

- 114

- 115

- 116

- 117

- 118

- 119

- 120

- 121

- 122

- 123

- 124

- 125

- 126

- 127

- 128

- 129

- 130

- 131

- 132

- 133

- 134

- 135

- 136

- 137

- 138

- 139

- 140

- 141

- 142

- 143

- 144

- 145

- 146

- 147

- 148

- 149

- 150

- 151

- 152

- 153

- 154

- 155

- 156

- 157

- 158

- 159

- 160

- 161

- 162

- 163

- 164

- 165

- 166

- 167

- 168

- 169

- 170

- 171

- 172

- 173

- 174

- 175

- 176

- 177

- 178

- 179

- 180

- 181

- 182

- 183

- 184

- 185

- 186

- 187

- 188

- 189

- 190

- 191

- 192

- 193

- 194

- 195

- 196

- 197

- 198

- 199

- 200

- 201

- 202

- 203

Hadoop

- 进到安装目录,修改core-site.xml

cd /home/xxxx/software/hadoop/etc/hadoop

- 添加配置

/apache/hadoop/bin/hdfs namenode -format

/apache/hadoop/sbin/start-dfs.sh

/apache/hadoop/sbin/stop-dfs.sh

/apache/hadoop/bin/hdfs namenode -format

Hive

- Copy hive/conf/hive-site.xml.template to hive/conf/hive-site.xml

配置如下 - 启动

/apache/hive/bin/hive --service metastore

<configuration> <property> <name>hive.exec.local.scratchdir</name> <value>/apache/tmp/hive</value> <description>Local scratch space for Hive jobs</description> </property> <property> <name>hive.downloaded.resources.dir</name> <value>/apache/tmp/hive/${hive.session.id}_resources</value> <description>Temporary local directory for added resources in the remote file system.</description> </property> <property> </property> <property> <name>hive.metastore.uris</name> <value>thrift://127.0.0.1:9083</value> <description>Thrift URI for the remote metastore.</description> </property> <property> </property> <property> <name>javax.jdo.option.ConnectionPassword</name> <value>secret</value> <description>password to use against metastore database</description> </property> <property> </property> <property> <name>javax.jdo.option.ConnectionURL</name> <value>jdbc:mysql://127.0.0.1/quartz?ssl=false</value> <description> JDBC connect string for a JDBC metastore. To use SSL to encrypt/authenticate the connection, provide database-specific SSL flag in the connection URL. </description> </property> <property> <name>javax.jdo.option.ConnectionDriverName</name> <value>org.mysql.Driver</value> <description>Driver class name for a JDBC metastore</description> </property> <property> </property> <property> <name>javax.jdo.option.ConnectionUserName</name> <value>king</value> <description>Username to use against metastore database</description> </property> <property> </property> <property> <name>hive.querylog.location</name> <value>/apache/tmp/hive</value> <description>Location of Hive run time structured log file</description> </property> <property> </property> <property> <name>hive.server2.logging.operation.log.location</name> <value>/apache/tmp/hive/operation_logs</value> </property> </configuration>- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

Spark

- 复制conf

cp spark-default.conf.template spark-default.conf

cp spark-env.sh.template spark-env.sh - 修改配置

- 启动

cp /apache/hive/conf/hive-site.xml /apache/spark/conf/ - start master and slave nodes

/apache/spark/sbin/start-master.sh

/apache/spark/sbin/start-slave.sh spark://localhost:7077 - stop master and slave nodes

/apache/spark/sbin/stop-slaves.sh

/apache/spark/sbin/stop-master.sh

/apache/spark/sbin/stop-all.sh

spark-default.conf spark.master yarn-cluster spark.serializer org.apache.spark.serializer.KryoSerializer spark.yarn.jars hdfs:///home/spark_lib/* spark.yarn.dist.files hdfs:///home/spark_conf/hive-site.xml spark.sql.broadcastTimeout 500 ==================================== spark-env.sh HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop SPARK_MASTER_HOST=localhost SPARK_MASTER_PORT=7077 SPARK_MASTER_WEBUI_PORT=8082 SPARK_LOCAL_IP=localhost SPARK_PID_DIR=/apache/pids- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

livy

- 进到安装目录,复制conf

cp livy.conf.template livy.template - 添加配置

livy.server.host = 127.0.0.1

livy.spark.master = yarn

livy.spark.deployMode = cluster

livy.repl.enableHiveContext = true

livy.server.port 8998 - 启动

livy-server start

4.Docker install

上面的步骤进行的还顺利吗。我信你个鬼。反正我是没成功。有docker干嘛不用

linux instlal docker: https://blog.csdn.net/weixin_43916074/article/details/125265520.- 安装docker,docker compose

请看上面的链接 - 干就完了

sysctl -w vm.max_map_count=262144 - install

docker pull apachegriffin/griffin_spark2:0.3.0

docker pull apachegriffin/elasticsearch

docker pull apachegriffin/kafka

docker pull zookeeper:3.5 - install

docker pull registry.docker-cn.com/apachegriffin/griffin_spark2:0.3.0

docker pull registry.docker-cn.com/apachegriffin/elasticsearch

docker pull registry.docker-cn.com/apachegriffin/kafka

docker pull zookeeper:3.5 - 配置文件docker-compose-batch.yml

#Licensed to the Apache Software Foundation (ASF) under one #or more contributor license agreements. See the NOTICE file #distributed with this work for additional information #regarding copyright ownership. The ASF licenses this file #to you under the Apache License, Version 2.0 (the #"License"); you may not use this file except in compliance #with the License. You may obtain a copy of the License at # # http://www.apache.org/licenses/LICENSE-2.0 # #Unless required by applicable law or agreed to in writing, #software distributed under the License is distributed on an #"AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY #KIND, either express or implied. See the License for the #specific language governing permissions and limitations #under the License. griffin: image: apachegriffin/griffin_spark2:0.3.0 hostname: griffin links: - es environment: ES_HOSTNAME: es volumes: - /var/lib/mysql ports: - 32122:2122 - 38088:8088 # yarn rm web ui - 33306:3306 # mysql - 35432:5432 # postgres - 38042:8042 # yarn nm web ui - 39083:9083 # hive-metastore - 38998:8998 # livy - 38080:8080 # griffin ui tty: true container_name: griffin es: image: apachegriffin/elasticsearch hostname: es ports: - 39200:9200 - 39300:9300 container_name: es- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 进入配置文件目录,启动

docker-compose -f docker-compose-batch.yml up -d - 人生苦短,我用docker

4.Error

5.GodFather

我是个迷信的人,若是他不幸发生意外,或被警察开枪打死,或在牢里上吊,或是他被闪电击中,那我会怪罪这个房间里的每一个人,到那时候我就不会再客气了…

Open Source Data Quality Tool

DataSphereStudio Website: https://github.com/WeBankFinTech/DataSphereStudio/blob/master/README-ZH.md.

DataSphereStudio Website: https://github.com/WeBankFinTech/DataSphereStudio-Doc/blob/main/zh_CN/install.md.

-

相关阅读:

PowerCLi 一键批量部署OVA 到esxi 7

带权并查集模板

nodejs+vue+elementui通用在线新闻发布网

四嗪-Methyltetrazine-PEG4-NH-Boc/PEG9-acid/PEG8-amine HCl salt/Sulfo-NHS ester性质

农林种植类VR虚拟仿真实验教学整体解决方案

java 基础语法

【nlp】天池学习赛-新闻文本分类-机器学习

数字集成电路设计(四、Verilog HDL数字逻辑设计方法)(三)

区块链技术如何对抗疫情

计算机毕业设计(附源码)python元江特色农产品售卖平台

- 原文地址:https://blog.csdn.net/weixin_43916074/article/details/126482222